Virtual character animation is receiving ever-growing attention from researchers, who have already proposed many tools aimed at improving the effectiveness of the production process. In particular, significant efforts are devoted to creating animation systems that also suit non-skilled users, so that they can benefit from a powerful communication instrument that improves information sharing in many contexts, such as product design, education and marketing. Apart from methods based on the traditional Windows-Icons-Menus-Pointer (WIMP) paradigm, the solutions devised so far rely on motion capture/retargeting (the so-called performance-based approaches), on non-conventional interfaces (voice inputs, sketches, tangible props, etc.), or on natural language processing (NLP) over text descriptions (e.g., to automatically trigger actions from a library). However, performance-based methods are difficult to use for creating non-ordinary movements (flips, handstands, etc.); natural interfaces are often used for rough posing, but the results need to be refined later; and automatic techniques still produce animations that lack realism. To deal with these limitations, we propose a multimodal animation system that combines performance- and NLP-based methods. The system recognizes natural commands (gestures, voice inputs) issued by the performer, extracts scene data from a text description and creates live animations in which pre-recorded character actions can be blended with the performer's motion to increase naturalness.

Generating computer animations is a very labor-intensive task, which requires animators to operate sophisticated interfaces. Hence, researchers continuously experiment with alternative interaction paradigms that could ease this task. Among others, sketching represents a valid alternative to traditional interfaces, since it can make interactions more expressive and intuitive. However, although the literature proposes several solutions that use sketch-based interfaces to solve different computer graphics challenges, they are generally not fully integrated in the computer animation pipeline. At the same time, Virtual Reality (VR) is becoming commonplace in many domains and has recently been recognized as a way to ease the animators' job as well, by improving their spatial understanding of the animated scene and providing them with more usable and effective interfaces. Based on all of the above, this paper presents an add-on for a well-known animation suite that combines the advantages offered by a sketch-based interface and VR to let animators define poses and create virtual character animations in an immersive environment.

DreamWorks Animation introduced a new parallel graph system, LibEE, as the engine for our next-generation in-house animation tool. It became clear that we needed to make changes in how we set up our character rigs for production. The new graph engine has two types of multithreading: first, individual nodes are internally multithreaded; second, the graph itself can run nodes and groups of nodes in parallel.
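The second type of multithreading mentioned for the graph engine, running independent nodes in parallel, can be illustrated with a minimal Python sketch. This is not LibEE's API, which the text does not describe: `evaluate_graph`, the node names and the toy rig are all hypothetical, and the scheduling relies only on the standard-library `graphlib` and `concurrent.futures` modules (Python 3.9 or later). Node-internal multithreading is not shown.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter


def evaluate_graph(nodes, deps, max_workers=4):
    """Evaluate a dependency graph, running independent nodes in parallel.

    nodes: dict mapping node name -> function taking a dict of input values
    deps:  dict mapping node name -> list of upstream node names
    (Hypothetical helper for illustration; not part of any real engine.)
    """
    sorter = TopologicalSorter({name: deps.get(name, []) for name in nodes})
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    results = {}
    pending = {}  # future -> node name
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Submit every node whose upstream nodes have all finished.
            for name in sorter.get_ready():
                inputs = {d: results[d] for d in deps.get(name, [])}
                pending[pool.submit(nodes[name], inputs)] = name
            # Block until at least one running node completes.
            done, _ = wait(list(pending), return_when=FIRST_COMPLETED)
            for future in done:
                name = pending.pop(future)
                results[name] = future.result()
                sorter.done(name)  # may unlock downstream nodes
    return results


# Toy rig (assumed for illustration): both arms depend on the spine,
# and the skin depends on both arms, so the arms can run concurrently.
rig_nodes = {
    "spine": lambda inputs: 1.0,
    "left_arm": lambda inputs: inputs["spine"] + 0.5,
    "right_arm": lambda inputs: inputs["spine"] - 0.5,
    "skin": lambda inputs: inputs["left_arm"] + inputs["right_arm"],
}
rig_deps = {
    "left_arm": ["spine"],
    "right_arm": ["spine"],
    "skin": ["left_arm", "right_arm"],
}
pose = evaluate_graph(rig_nodes, rig_deps)
```

In this sketch the amount of parallelism depends entirely on the shape of the graph: a rig wired as one long chain of nodes would still evaluate serially, which is one plausible reason why a parallel engine would require changes in how character rigs are set up.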

Experimental results in different usage scenarios show the benefits offered by the designed interaction strategy with respect to a mouse & keyboard-based interface both for expert and non-expert users.

#Premo animation software download free#The proposed solution exploits both off-the-shelf hardware components (like the Lego Mindstorms EV3 bricks and the Microsoft Kinect, used for building the tangible device and tracking animator's skeleton) and free open-source software (like the Blender animation tool), thus representing an interesting solution also for beginners approaching the world of digital animation for the first time.

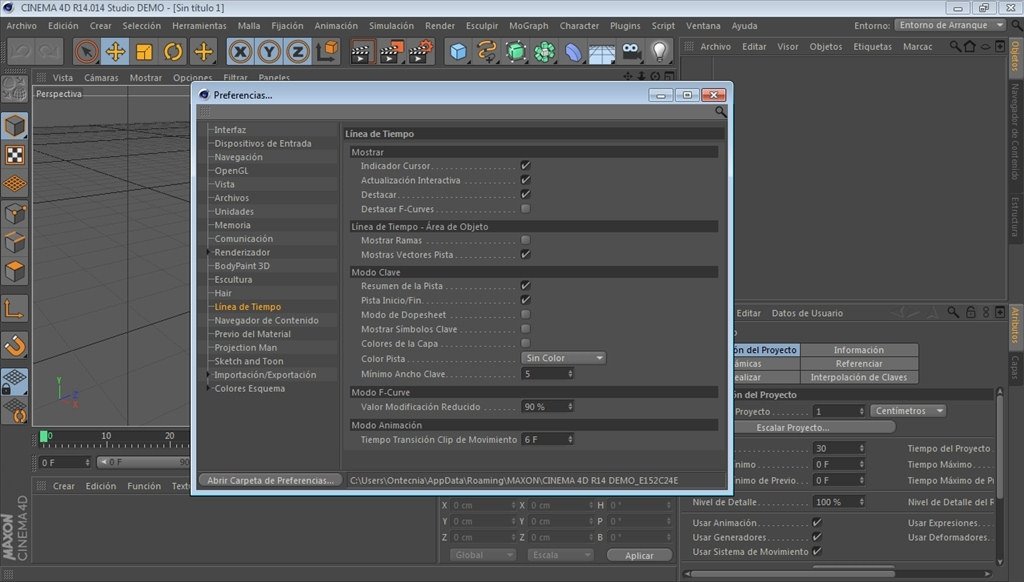

High-level functionalities of the animation software are made accessible via a speech interface, thus letting the user control the animation pipeline via voice commands while focusing on his or her hands and body motion. To this aim, orientations of an instrumented prop are recorded together with animator's motion in the 3D space and used to quickly pose characters in the virtual environment. This paper proposes a tool designed to support computer animation production processes by leveraging the affordances offered by articulated tangible user interfaces and motion capture retargeting solutions. Software for computer animation is generally characterized by a steep learning curve, due to the entanglement of both sophisticated techniques and interaction methods required to control 3D geometries.
